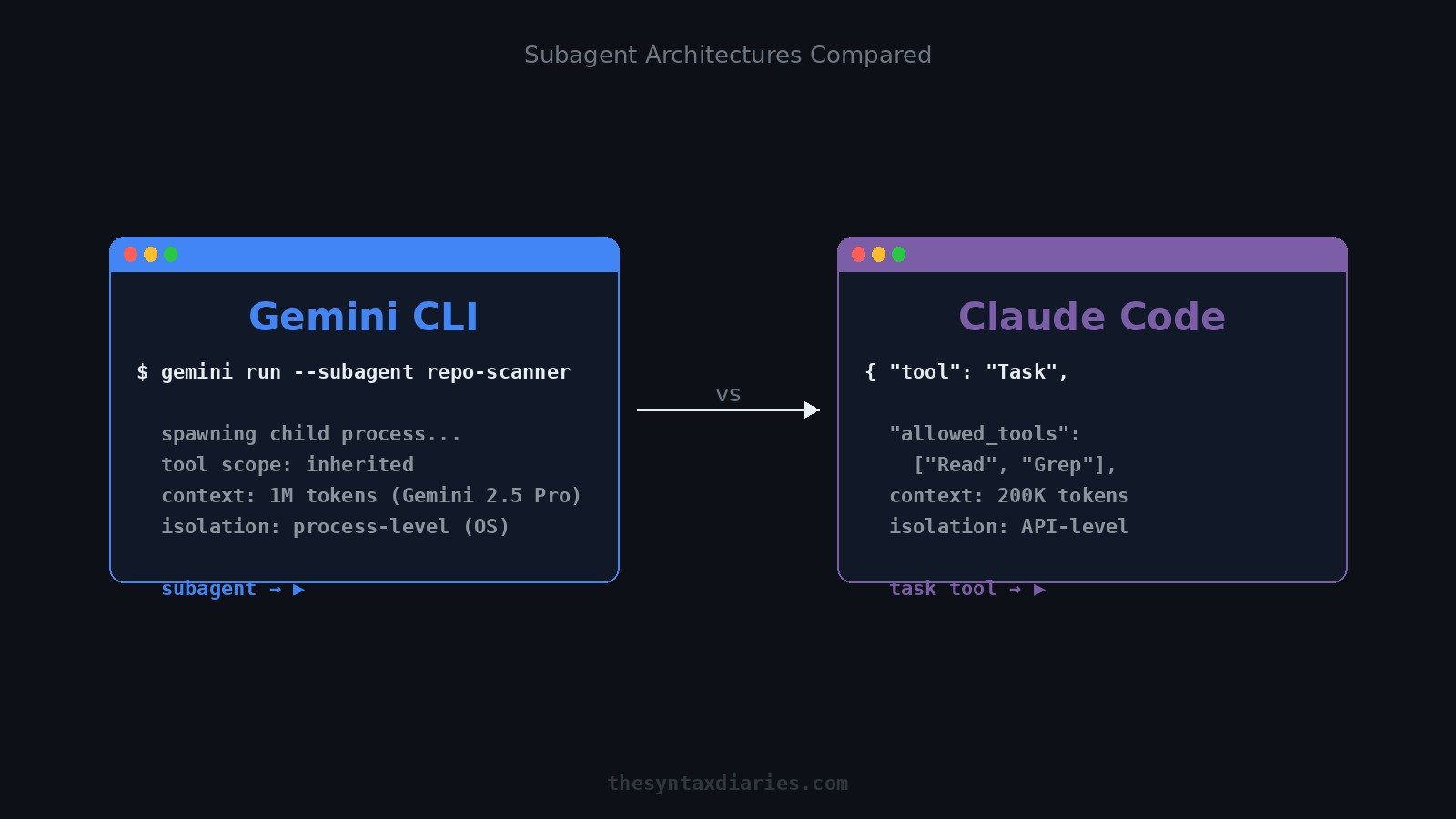

Gemini CLI shipped subagents in April 2026. Here is an architectural comparison with Claude Code covering orchestration, context capacity, and permission controls.

If you've been watching the AI coding tool space, you've probably noticed that "subagents" went from a buzzword to a shipping feature faster than most people expected. Google pushed subagent support in Gemini CLI with v0.1.6 in April 2026, and Claude Code has had its task tool for a while now. Both let you spin up agent-within-agent workflows — but the way they actually do it under the hood is pretty different, and those differences matter when you're deciding which tool to reach for.

This isn't a benchmarks post. It's an architectural comparison so you can make an informed choice without having to dig through changelogs and source code yourself.

When you invoke a subagent in Gemini CLI, what you're actually doing is asking the CLI to spawn a child process. That child process is a fully independent agent instance — it gets its own environment, its own tool scope, and it communicates with the parent via structured JSON handoff messages. The parent agent delegates a task, the child runs it, returns structured output, and the parent aggregates the results.
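The handoff pattern can be sketched in a few lines of Python. The parent spawns a child process, writes one JSON task to its stdin, and reads one JSON result from its stdout. This is a minimal sketch of the general technique, not Gemini CLI's actual implementation or wire format. `run_subagent` and the stub child are hypothetical, and the child only builds a summary instead of calling a model.

```python
import json
import subprocess
import sys

# Hypothetical child agent: reads one JSON task from stdin, writes one JSON result to stdout.
# A real subagent would call a model and its tools here; this stub only builds a summary.
CHILD_SOURCE = r"""
import json, sys
task = json.load(sys.stdin)
result = {"findings": [], "summary": f"scanned {task['target_path']} for {task['pattern']}"}
json.dump(result, sys.stdout)
"""


def run_subagent(task: dict, child_source: str = CHILD_SOURCE, timeout: float = 30.0) -> dict:
    """Spawn the child in its own OS process and exchange one JSON message each way."""
    proc = subprocess.run(
        [sys.executable, "-c", child_source],
        input=json.dumps(task),
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    if proc.returncode != 0:
        # A crash in the child surfaces here as an error; the parent process keeps running.
        raise RuntimeError(f"subagent exited with {proc.returncode}: {proc.stderr.strip()}")
    return json.loads(proc.stdout)


if __name__ == "__main__":
    print(run_subagent({"target_path": "src/", "pattern": "eval("}))
```

Because the child is a separate OS process, an exception inside it reaches the parent as a non-zero exit code rather than taking the parent down.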

Here's what a basic Gemini CLI subagent definition looks like in practice:

```yaml
subagents:
  - name: repo-scanner
    description: Scans large codebases for security anti-patterns
    tools:
      - read_file
      - list_directory
      - search_code
    handoff:
      input_schema:
        type: object
        properties:
          target_path:
            type: string
          pattern:
            type: string
      output_schema:
        type: object
        properties:
          findings:
            type: array
          summary:
            type: string
```

The parent calls this subagent, passes target_path and pattern, and waits for findings and summary to come back. The design is clean and composable, and it relies on process-level isolation: each subagent runs in its own OS process with its own memory space.
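Because the handoff is typed, the parent can check payloads in both directions. This sketch mirrors the two schemas from the YAML definition as Python dicts and validates them with a small hand-rolled checker rather than a JSON Schema library. It assumes every listed property is required, which the definition itself does not state, and `schema_errors` is a hypothetical helper.

```python
from typing import Any

# The handoff schemas from the YAML definition, expressed as plain dicts.
INPUT_SCHEMA = {
    "type": "object",
    "properties": {"target_path": {"type": "string"}, "pattern": {"type": "string"}},
}
OUTPUT_SCHEMA = {
    "type": "object",
    "properties": {"findings": {"type": "array"}, "summary": {"type": "string"}},
}

# Map the schema type names used above to Python types.
JSON_TYPES = {"object": dict, "array": list, "string": str}


def schema_errors(payload: Any, schema: dict) -> list[str]:
    """Return a list of problems; an empty list means the payload passes.
    Assumption: every listed property is required."""
    if not isinstance(payload, JSON_TYPES[schema["type"]]):
        return [f"expected {schema['type']}"]
    errors = []
    for name, spec in schema.get("properties", {}).items():
        if name not in payload:
            errors.append(f"missing {name}")
        elif not isinstance(payload[name], JSON_TYPES[spec["type"]]):
            errors.append(f"{name}: expected {spec['type']}")
    return errors
```

The parent would run `schema_errors(task, INPUT_SCHEMA)` before delegating and `schema_errors(result, OUTPUT_SCHEMA)` before trusting what comes back.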

The big draw here is the 1M token context window that Gemini 2.5 Pro brings. You can point a subagent at an enormous repo and have it do a real sweep without constantly hitting context limits. For large-codebase tasks — security audits, dependency analysis, cross-file refactors — this is genuinely useful.
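Before pointing a subagent at a whole repo, it helps to know roughly how many tokens the repo is. The Python sketch below assumes about 4 characters per token, a common rule of thumb rather than either vendor's tokenizer. `estimate_tokens`, `windows_that_fit`, the extension list, and the window labels are illustrative.

```python
import os
import tempfile

# Assumption: ~4 characters per token, a rough heuristic for code and English text.
# Real tokenizers vary; use the model's own token counter when exact numbers matter.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOWS = {"gemini_1m": 1_000_000, "claude_200k": 200_000}  # sizes quoted in this article


def estimate_tokens(root: str, exts: tuple[str, ...] = (".py", ".ts", ".go", ".java")) -> int:
    """Walk `root` and estimate tokens for source files with the given extensions."""
    total_chars = 0
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            if name.endswith(exts):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    total_chars += len(f.read())
    return total_chars // CHARS_PER_TOKEN


def windows_that_fit(root: str) -> list[str]:
    """Labels of the context windows the estimated repo size fits into."""
    tokens = estimate_tokens(root)
    return [label for label, size in CONTEXT_WINDOWS.items() if tokens <= size]


if __name__ == "__main__":
    # Demo on a throwaway directory; point estimate_tokens at a real checkout instead.
    with tempfile.TemporaryDirectory() as repo:
        with open(os.path.join(repo, "app.py"), "w", encoding="utf-8") as f:
            f.write("print('hello')\n" * 1000)
        print(estimate_tokens(repo), windows_that_fit(repo))
```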

Claude Code takes a different approach. When you use the task tool to spawn a subagent, you're not launching a new process — you're making an API call that spins up an isolated Claude instance. The orchestrator (your main agent) explicitly grants that instance a set of tools, and the subagent runs within those constraints.

The isolation here is API-level rather than OS-level. That makes spin-up significantly faster — there's no process bootstrapping overhead — but it also means the isolation model is different. You're trusting the API layer rather than the OS process boundary.

A typical Claude Code task tool invocation looks like this:

```json
{
  "tool": "Task",
  "input": {
    "description": "Review the authentication module for SQL injection vulnerabilities",
    "prompt": "Examine the files in src/auth/ and identify any SQL injection risks. Return a structured list of findings with file, line, and severity.",
    "allowed_tools": ["Read", "Glob", "Grep"]
  }
}
```

Notice the allowed_tools field: it is explicit and set per invocation. The subagent gets exactly the tools you grant it, nothing more, so the orchestrator always controls the permission boundary.
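To illustrate what a per-invocation allowlist buys you, here is a small Python model of the permission boundary. The orchestrator builds a task request shaped like the JSON above, and a dispatcher refuses any tool call the grant does not cover. This is an illustrative sketch, not how Claude Code enforces permissions internally. `make_task`, `dispatch`, and the stub tool registry are hypothetical.

```python
from typing import Callable

# Hypothetical tool registry; the stubs stand in for real file and shell tools.
TOOLS: dict[str, Callable[[str], str]] = {
    "Read": lambda arg: f"contents of {arg}",
    "Glob": lambda arg: f"paths matching {arg}",
    "Grep": lambda arg: f"matches for {arg}",
    "Write": lambda arg: f"wrote {arg}",
    "Bash": lambda arg: f"ran {arg}",
}


def make_task(description: str, prompt: str, allowed_tools: list[str]) -> dict:
    """Build a request shaped like the Task JSON, rejecting unknown tool names early."""
    unknown = set(allowed_tools) - set(TOOLS)
    if unknown:
        raise ValueError(f"unknown tools: {sorted(unknown)}")
    return {
        "tool": "Task",
        "input": {"description": description, "prompt": prompt, "allowed_tools": list(allowed_tools)},
    }


def dispatch(task: dict, tool: str, arg: str) -> str:
    """Execute a tool on the subagent's behalf only if its task grants that tool."""
    if tool not in task["input"]["allowed_tools"]:
        raise PermissionError(f"{tool} is not granted to this subagent")
    return TOOLS[tool](arg)
```

Because the grant travels with the request, auditing what a subagent could do means reading a single field.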

Claude Code's context window is 200K tokens, which is substantially smaller than Gemini CLI's 1M. But the task tool is designed around scoping: you point a subagent at specific files or modules, not an entire repo. That tradeoff shapes everything about how you use it.
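Scoping aggressively often means splitting a codebase into chunks that each fit one subagent's budget. The sketch uses first-fit-decreasing bin packing. It assumes per-file token counts are already estimated (for example, as characters divided by 4) and a 150K budget that leaves headroom under a 200K window for the prompt, tool output, and reply. `batch_files` and the budget figure are illustrative.

```python
def batch_files(sizes: dict[str, int], budget: int = 150_000) -> list[list[str]]:
    """Pack files into batches whose estimated token totals stay within `budget`.
    First-fit decreasing: place the largest files first, each into the first batch with room."""
    batches: list[list[str]] = []
    totals: list[int] = []
    for path, tokens in sorted(sizes.items(), key=lambda kv: kv[1], reverse=True):
        if tokens > budget:
            raise ValueError(f"{path} alone exceeds the budget; split it before delegating")
        for i, total in enumerate(totals):
            if total + tokens <= budget:
                batches[i].append(path)
                totals[i] += tokens
                break
        else:
            # No existing batch has room, so open a new one (one more subagent).
            batches.append([path])
            totals.append(tokens)
    return batches
```

Each returned batch becomes the file scope for one subagent, so the number of batches is roughly the number of subagents you will need.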

If you're building out agent workflows and want a solid foundation for the infrastructure layer, the MCP server guide for AI agent workflows covers the tool-routing side of this in detail.

Let's be direct about what the process-level vs API-level distinction actually means for you.

Process-level isolation (Gemini CLI): Each subagent is a real child process. This is heavier — more OS overhead, slower spin-up, more memory — but it gives you strong isolation guarantees. If a subagent crashes or goes haywire, it doesn't take the parent down with it. The tool scope is inherited from the parent by default, which is convenient but less granular.

API-level isolation (Claude Code): Each subagent is an API call. Lighter, faster to spin up, easier to run many in parallel without hammering system resources. The isolation is logical rather than physical — you're relying on the model and API layer to enforce boundaries. But the explicit tool allowlist per invocation gives you fine-grained control over what each subagent can actually touch.
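API-level fan-out is mostly an I/O-concurrency problem, which Python's `asyncio` handles without spawning any processes. In this sketch, `call_subagent` is a hypothetical placeholder that sleeps instead of calling a real API, and the concurrency cap of 8 is an arbitrary assumption. In practice, you would size it to your provider's rate limits.

```python
import asyncio


# Hypothetical stand-in for an API call that runs one isolated subagent.
# A real client would send the prompt and granted tools over HTTP and await the reply.
async def call_subagent(prompt: str) -> str:
    await asyncio.sleep(0.01)  # simulated network latency; no local process is started
    return f"done: {prompt}"


async def fan_out(prompts: list[str], max_concurrency: int = 8) -> list[str]:
    """Run many subagents concurrently, with at most `max_concurrency` in flight."""
    limit = asyncio.Semaphore(max_concurrency)

    async def run_one(prompt: str) -> str:
        async with limit:
            return await call_subagent(prompt)

    # gather returns results in input order, regardless of completion order.
    return list(await asyncio.gather(*(run_one(p) for p in prompts)))


if __name__ == "__main__":
    results = asyncio.run(fan_out([f"review module {i}" for i in range(20)]))
    print(len(results), results[0])
```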

For sweeping a giant repo, Gemini CLI's architecture scales better per task, because each subagent can hold far more of the codebase in its 1M-token context. For high-assurance, lower-trust tasks where you need to be certain a subagent can only touch specific files and tools, Claude Code's model is more defensible.

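Gemini CLI subagents are stronger when:

- the task needs a broad sweep of a large codebase: security audits, dependency analysis, cross-file refactors
- tasks are messy or unpredictable and you want a misbehaving subagent contained in its own process
- permission requirements are loose enough that inherited tool scopes are acceptable

Claude Code subagents are stronger when:

- each subagent must be limited to specific tools and files
- you want to run many lightweight subagents in parallel without taxing local resources
- the workflow is automated and security-sensitive: CI/CD pipelines, PR automation, production infrastructure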

This maps to the broader pattern in AI code review in 2026: the tools that win are the ones that match their architecture to the trust model of the task.

This is where the two approaches diverge most significantly, and where I'd push back hardest on Gemini CLI's current design.

By default, Gemini CLI subagents inherit the parent's tool scope. That means if your parent agent has write access to the filesystem, your subagents do too — unless you explicitly restrict them. It's a permissive-by-default model, which is convenient but problematic if you're thinking seriously about gh skill and agent security.

Claude Code inverts this. The orchestrator must explicitly grant tools to each subagent at invocation time. There's no inheritance — a subagent starts with nothing and only gets what you give it. That's a principled approach to least-privilege, and it matters a lot when you're running automated agents against production codebases or sensitive repos.

In practice, this means Claude Code subagents are easier to audit. You can look at a task tool call and know exactly what that subagent could and couldn't do. With Gemini CLI, you need to trace the inheritance chain from parent to child to understand the actual permission surface.
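The difference between the two permission defaults, and why inheritance chains are harder to audit, fits in a few pure functions. This sketch models tool scopes as sets of tool names and encodes the defaults as this article describes them, not either product's actual code. The function and tool names are hypothetical, and one assumption is added: a child can never hold a tool its parent lacks.

```python
from typing import Callable, Optional

Scope = frozenset[str]
Policy = Callable[[Scope, Optional[Scope]], Scope]


def inherit_by_default(parent: Scope, requested: Optional[Scope]) -> Scope:
    """Permissive: if the child declares no tools, it receives the parent's full scope."""
    return parent if requested is None else parent & requested


def deny_by_default(parent: Scope, requested: Optional[Scope]) -> Scope:
    """Least privilege: if nothing is granted, the child receives no tools at all."""
    return frozenset() if requested is None else parent & requested


def effective_scope(root: Scope, chain: list[Optional[Scope]], policy: Policy) -> Scope:
    """Walk a parent -> child -> grandchild chain and return the deepest agent's scope.
    Under deny_by_default, the last grant is already an upper bound on that scope;
    under inherit_by_default, an omitted grant forces you to keep walking up the chain."""
    scope = root
    for requested in chain:
        scope = policy(scope, requested)
    return scope
```

With a root scope that includes write and shell access, two levels of omitted grants leave the grandchild with everything under `inherit_by_default` and with nothing under `deny_by_default`.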

Neither approach is inherently broken, but they represent different philosophies: convenience-first vs safety-first. Know which one your use case requires.

A few patterns I've seen people get wrong when first adopting subagent workflows:

Over-delegating to subagents. Not every task needs a subagent. If the task is small enough to fit in the parent's context and doesn't benefit from isolation, you're adding overhead for no gain. Use subagents when parallelism or isolation actually matter.

Ignoring the context window ceiling. Even with Gemini CLI's 1M token window, you can still hit limits if you're not thoughtful about what you're feeding subagents. And with Claude Code's 200K limit, you need to scope tasks aggressively. Don't assume "big context = no problem."

Trusting inherited permissions in Gemini CLI. Just because a subagent can access a tool doesn't mean it should. If you're using Gemini CLI, explicitly define the tool scope for subagents rather than relying on inheritance. It's more work upfront, but it's much safer.

Treating subagent output as ground truth. Subagent output is input to the orchestrator's reasoning, not a final answer. The orchestrator needs to validate and sanity-check what subagents return before aggregating it, especially when many run in parallel.
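Here is a minimal sketch of that validation step. It assumes each subagent returns a dict with a `findings` list, and that each finding carries the `file`, `line`, and `severity` fields requested in the Task prompt earlier. The severity scale and the choice to deduplicate on `(file, line)` are assumptions for illustration.

```python
from typing import Any

SEVERITIES = {"low", "medium", "high", "critical"}  # assumed scale, not defined by either tool


def is_valid_finding(f: Any) -> bool:
    """A finding must name a file, an integer line, and a known severity string."""
    return (
        isinstance(f, dict)
        and isinstance(f.get("file"), str)
        and isinstance(f.get("line"), int)
        and isinstance(f.get("severity"), str)
        and f["severity"] in SEVERITIES
    )


def aggregate(reports: list[Any]) -> tuple[list[dict], int]:
    """Merge subagent reports: keep well-formed findings, drop duplicates, count rejects."""
    seen: set[tuple[str, int]] = set()
    merged: list[dict] = []
    rejected = 0
    for report in reports:
        findings = report.get("findings") if isinstance(report, dict) else None
        if not isinstance(findings, list):
            rejected += 1  # the whole report is malformed
            continue
        for f in findings:
            if not is_valid_finding(f):
                rejected += 1
                continue
            key = (f["file"], f["line"])
            if key not in seen:  # two subagents flagging the same spot count once
                seen.add(key)
                merged.append(f)
    return merged, rejected
```

A non-zero reject count tells the orchestrator to re-run or inspect a subagent rather than silently trusting a partial result.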

Not considering spin-up cost at scale. If you're orchestrating dozens of subagents, the spin-up cost per agent adds up. Claude Code's API-level isolation is faster per call; Gemini CLI's process-level isolation has more overhead. Profile this early if you're building at scale.
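A quick way to put a number on spin-up cost is to time it. This sketch measures only the startup of a bare Python interpreter, which is a lower bound, since an agent CLI loads far more before doing any work. For API-level subagents, wrap a call from your own client in `median_seconds` instead. Both helper names are hypothetical.

```python
import statistics
import subprocess
import sys
import time
from typing import Callable


def median_seconds(fn: Callable[[], object], runs: int = 5) -> float:
    """Median wall-clock time of fn() over several runs; the median resists outliers."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def spawn_noop_process() -> None:
    # Cost of starting and tearing down one bare OS process, with no agent work inside.
    subprocess.run([sys.executable, "-c", "pass"], check=True)


if __name__ == "__main__":
    per_spawn = median_seconds(spawn_noop_process)
    # Back-of-envelope: serial startup overhead for 50 subagents, before any real work.
    print(f"~{per_spawn * 1000:.1f} ms per spawn, ~{per_spawn * 50:.2f} s for 50 serial spawns")
```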

For large-repo sweeps where I need to cover a lot of ground fast and don't have tight permission requirements, I'd reach for Gemini CLI. The 1M context window is genuinely useful, and process-level isolation means subagents are robust even if tasks are messy or unpredictable.

For anything where I need tight control over what the agent can touch — especially in CI/CD pipelines, automated PR workflows, or anything touching production infrastructure — I'd use Claude Code. The explicit tool allowlist per subagent is the right model for those environments. The comparisons to OpenAI Codex and AI coding agents are instructive here too: the best tools for production use are the ones that make permissions explicit and auditable.

In practice, these tools aren't mutually exclusive. You might use Gemini CLI for exploratory analysis phases and Claude Code for the automated, permission-sensitive execution phases that follow.

Subagents are genuinely useful — they're not just a marketing story. But the implementation details matter more than the headline feature. Process-level vs API-level isolation, inherited vs explicit tool scopes, 1M vs 200K context: these aren't minor footnotes. They determine whether a subagent architecture is appropriate for your use case.

Gemini CLI's approach optimizes for scale and coverage. Claude Code's approach optimizes for control and auditability. Neither is universally better — but pretending they're equivalent because they both ship "subagents" would be doing yourself a disservice.

Read the architecture docs for whichever you're planning to use seriously. And test with real workloads before you commit — benchmarks in controlled environments don't tell you much about how these tools behave when the repo is messy and the tasks are ambiguous.
