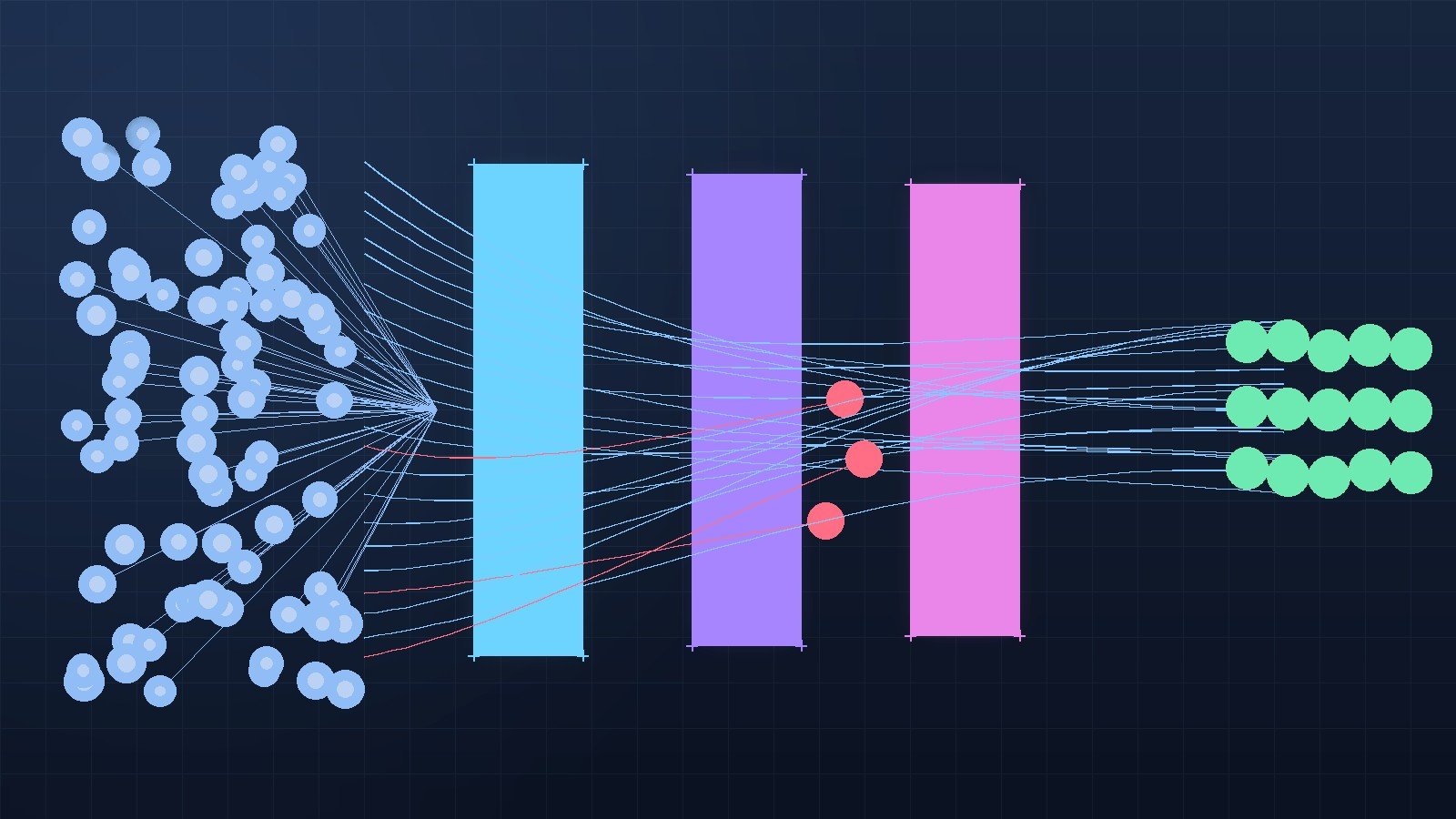

AI is generating more pull requests than humans can review. The fix isn't picking the best AI code review tool — it's combining the right ones.

A junior dev on my team merged a four-line bug fix last week. The PR was opened by an agent, summarized by an agent, reviewed by an agent, and approved by a human who clicked through in eleven seconds. The fix worked. It also added a redundant database call inside a render path that nobody noticed for three days.

That story is not unusual anymore. Pull request volume is up. Reviewer attention is not. AI code review is the only realistic answer, but the tools differ wildly in what they actually catch, and pretending they're interchangeable is how regressions ship to production. This is a practical look at where AI code review stands in April 2026, which tools earn their keep, and a workflow that doesn't fall apart when half your PRs are written by something that doesn't sleep.

CodeRabbit's 2026 analysis comparing roughly equivalent AI-generated and human-generated changes found AI code produces about 1.7x more issues per change, with the gap concentrated in unused code, error handling, and validation gaps. A separate Mining Software Repositories 2026 study found that 28% of AI-generated PRs merge near-instantly, but the rest enter an iterative loop where many get abandoned outright. Cloudflare reported that its review queue jumped sharply once Claude Code became standard internally, and Anthropic itself published a metric that explains the pressure cleanly: before they shipped their own review agent, 16% of internal PRs got substantive review comments. After, that number went to 54%.

The point isn't that AI writes worse code. The point is that AI writes a lot more code, and the parts humans were already bad at catching at scale (silent fallbacks, off-by-one in edge branches, accidental N+1 queries, tests that pass without exercising the change) are exactly the parts a tired senior reviewer skims over at 4 PM on a Friday. The review gap isn't a quality problem. It's a throughput problem with quality consequences.

There are now five tools serious enough to evaluate: Greptile, CodeRabbit, Cursor BugBot, GitHub Copilot code review, and Anthropic's Code Review for Claude Code. They are not solving the same problem.

| Tool | Catch rate (independent benchmarks) | False positives per run | Approach | Best at |
|---|---|---|---|---|
| Greptile | ~82% | ~11 | Whole-repo graph, parallel agents | Cross-file breaks, architectural drift |
| Cursor BugBot | ~80% resolution | Low (8-pass voting) | 8 parallel passes with shuffled diff order | Logic bugs, race conditions, edge cases |
| GitHub Copilot | ~54% | Low | PR-scoped, batch autofix | Style at scale, vulnerability autofix |
| CodeRabbit | ~44% | ~2 | PR-scoped, low-noise summaries | Validation gaps, fast feedback |
| Claude Code Review | New (March 2026) | Multi-agent verification | Severity-ranked agents | Logic errors with explanations |

A few facts worth keeping in your head. Greptile catches the most bugs because it indexes your whole repo and reasons about changes in context, but it is the noisiest of the five. Cursor BugBot launched in July 2025 and added cloud-VM autofix in February 2026; its trick is running eight parallel review passes with the diff in different orders so that a single bad sample doesn't get the last word. CodeRabbit is the cleanest signal-to-noise but misses more than half of real bugs in benchmarks, which matters if your only line of defense is its comments. GitHub's batch autofix, shipped in March 2026, lets you resolve a class of issues with one click instead of approving twenty identical suggestions. Anthropic's Code Review, launched March 9, 2026, runs a team of agents in parallel with a verification pass to filter false positives, and lands one high-signal summary plus inline comments; it costs roughly $15 to $25 per review at current token prices.

That price point is a real consideration. A team merging two thousand PRs a month at $20 each is paying $40,000 a month for review alone. BugBot at $40 per contributor per month is cheaper for small teams and brutal for large ones, since every contributor on the repo needs a seat. There is no free option that catches what these catch.
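The arithmetic above is easy to rerun with your own volumes. A minimal sketch, using the $20 midpoint and the $40 seat price quoted above; the function names are made up for illustration, and real invoices will depend on PR size and plan details.

```python
def per_review_cost(prs_per_month: int, price_per_review: float) -> float:
    """Monthly bill for a tool that charges per reviewed PR."""
    return prs_per_month * price_per_review


def per_seat_cost(contributors: int, price_per_seat: float) -> float:
    """Monthly bill for a tool that charges per contributor seat."""
    return contributors * price_per_seat


def break_even_prs_per_contributor(price_per_seat: float, price_per_review: float) -> float:
    """PRs per contributor per month at which both pricing models cost the same."""
    return price_per_seat / price_per_review


print(per_review_cost(2000, 20.0))                 # the two-thousand-PR team above
print(per_seat_cost(30, 40.0))                     # a 30-seat team on per-seat pricing
print(break_even_prs_per_contributor(40.0, 20.0))  # PRs per seat where the models meet
```

Under these two prices alone, a seat pays for itself once a contributor opens more than two PRs a month. Occasional contributors who still need a seat are what push the per-seat bill up on large repos.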

Greptile's high catch rate hides a problem teams discover three weeks in: when reviewers see eleven comments and two of them are right, they start ignoring all eleven. The tool has the highest absolute bug detection but produces what clinicians and human-factors engineers call alarm fatigue. You need triage on top of it.
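One way to put triage on top of a noisy reviewer is to cap what reaches the author. The sketch below is an illustration under stated assumptions: the `Finding` fields, the confidence score, and the severity scale are hypothetical and are not Greptile's actual output format.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Finding:
    path: str
    message: str
    confidence: float  # 0.0 to 1.0, assumed to be reported by the tool
    severity: int      # hypothetical scale: 1 = nit, 4 = critical


def triage(findings: List[Finding], min_confidence: float = 0.7,
           max_comments: int = 3) -> List[Finding]:
    """Drop low-confidence findings, then keep only the most severe few."""
    kept = [f for f in findings if f.confidence >= min_confidence]
    # Most severe first; confidence breaks ties within a severity level.
    kept.sort(key=lambda f: (f.severity, f.confidence), reverse=True)
    return kept[:max_comments]
```

The cap matters as much as the threshold: an author who sees three comments is more likely to read all three than one who sees eleven.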

CodeRabbit's brevity is the other failure mode. A two-comment review on a 600-line PR feels reassuring and is, statistically, telling you nothing. If your only signal is "CodeRabbit had no concerns," you have not been reviewed. You have been told the easy stuff is fine.

Cursor BugBot's 8-pass voting is the most novel design and the most expensive at scale. It also intentionally ignores style and formatting, which means if your team relies on a single tool to also enforce conventions, BugBot is the wrong choice. Pair it with a linter and a separate doc-style pass.
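The voting idea itself is simple to illustrate. This is a sketch of the general technique (several passes over a shuffled diff, keep only findings that enough passes agree on), not BugBot's implementation; `review_pass` stands in for a model call, and the `min_votes` cutoff is an assumed parameter.

```python
import random
from collections import Counter
from typing import Callable, Iterable, List, Optional


def review_with_voting(
    hunks: List[str],
    review_pass: Callable[[List[str]], Iterable[str]],  # hypothetical model call
    passes: int = 8,
    min_votes: int = 5,
    seed: Optional[int] = None,
) -> List[str]:
    """Run several review passes over shuffled hunks and keep agreed findings."""
    rng = random.Random(seed)
    votes: Counter = Counter()
    for _ in range(passes):
        order = hunks[:]
        rng.shuffle(order)  # a different diff order for each pass
        # set() so one pass cannot vote twice for the same finding
        votes.update(set(review_pass(order)))
    return sorted(finding for finding, n in votes.items() if n >= min_votes)
```

A finding that shows up in only one pass is treated as a bad sample and dropped. The cost is the obvious one: eight passes means roughly eight times the model calls per PR.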

Copilot's autofix is genuinely good for vulnerability classes covered by GitHub Advanced Security, but its general code-review feedback is shallower than the dedicated tools. It is a fine baseline if you already pay for Copilot. It is not a strategy.

Claude Code Review is the most opinionated of the bunch and the newest, which means we don't yet have multi-month data on regression rates. The architecture is sound: dispatch agents, verify findings, rank severity. The risk is the cost curve. If you let it loose on a monorepo with thousand-line PRs, you will notice it on your bill before you notice it in your incident count.

Picking one tool and walking away is the mistake. The teams I see surviving 2026 are running layered review, with each layer doing one job and refusing the others. Here is the shape that holds up.

The first layer is deterministic and free. Linting, formatting, type checking, secrets scanning, and SAST run in CI on every PR before any AI sees the change. This is non-negotiable, and AI review tools are explicitly worse at these jobs than dedicated tools. If your team treats AI review as a substitute for `eslint --max-warnings=0` and `tsc --noEmit`, you are paying tokens for work a free tool already did.
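A minimal sketch of that first layer as a fail-fast gate. The two commands are the ones named above; running them through `npx` and driving them from a Python script are assumptions about your setup, and most teams would express the same thing directly in their CI config.

```python
import subprocess
from typing import List, Optional

# Assumes a Node project with eslint and typescript installed locally.
DEFAULT_CHECKS: List[List[str]] = [
    ["npx", "eslint", ".", "--max-warnings=0"],
    ["npx", "tsc", "--noEmit"],
]


def first_failing_check(checks: List[List[str]]) -> Optional[List[str]]:
    """Run each command in order; return the first one that exits non-zero."""
    for cmd in checks:
        if subprocess.run(cmd).returncode != 0:
            return cmd
    return None  # everything passed, so the AI reviewer may run next
```

In CI, call `first_failing_check(DEFAULT_CHECKS)` and exit non-zero if it returns a command, so the AI review step never starts on a PR that fails lint or type checks.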

The second layer is the AI bug-catcher. Pick one of Greptile, BugBot, or Claude Code Review based on your codebase. Greptile if you have a large monorepo with cross-file dependencies that confuse diff-only tools. BugBot if you live in Cursor already and want the parallel-pass voting model. Claude Code Review if you're an Anthropic shop and your concern is logic errors with explanations a junior engineer can act on. Don't run two of these at once unless you enjoy reading the same comment three times.

The third layer is human review, but with a narrower job. Reviewers stop checking for bugs the AI already checked for. They check architecture, intent, scope creep, and "should this code exist at all." This is the only reasonable human use of attention now. A senior engineer reading a 400-line PR and trying to find a null deref is throwing money away.

The fourth layer is the merge gate. AI review comments do not block merges by default. They surface to the author, who has to acknowledge or fix them. Blocking on AI signal turns the bot into a tax collector and starts the cycle of devs working around it. Anthropic's data on resolution rates only works because suggestions feel cheap to address.

For agent-authored PRs specifically, two extra controls matter, and they are exactly the ones most teams skip. The agent's GitHub identity should be a separate bot user with the minimum scopes it needs. Branch protection should require a human approval before merge regardless of how good the AI review looked. A solid breakdown of agent permissions on GitHub lives in "GitHub CLI agent skills and the security gaps you can't ignore," and the pattern there generalizes to any tool with write access to your repos.
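The merge gate and the agent-PR rule fit in a few lines of policy. A sketch under assumptions: the `PullRequest` fields are hypothetical, and in practice the blocking half lives in branch protection settings instead of application code.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class PullRequest:
    author_is_agent: bool
    human_approvals: int
    open_ai_comments: int  # AI findings the author has not acknowledged or fixed


def merge_gate(pr: PullRequest) -> Tuple[bool, List[str]]:
    """Block only on the missing human approval; AI findings stay advisory."""
    can_merge = True
    notes: List[str] = []
    if pr.author_is_agent and pr.human_approvals < 1:
        can_merge = False
        notes.append("blocked: agent-authored PR needs a human approval")
    if pr.open_ai_comments > 0:
        # Surfaced to the author, but never turned into a merge block.
        notes.append(f"advisory: {pr.open_ai_comments} AI comment(s) awaiting acknowledgement")
    return can_merge, notes
```

Keeping the two rules in separate branches is the point: the human approval is a hard requirement, while the AI comments only ever produce a note.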

Trusting the catch rate as a single number. Greptile's 82% is real, but it's measured on benchmarks, not your codebase. The first month of any AI review tool is calibration. Treat the catch rate as an upper bound, not a guarantee.

Letting the AI review the agent's own code. If you use Claude Code to write the PR and Claude Code Review to review it, you have one model checking its own work. The same blind spots persist. The fix is cheap: the writer and the reviewer should be different model families. A PR written by Claude reviewed by Greptile is a real second opinion. A PR written by Claude reviewed by Claude is theater.

Skipping tests because the AI says the change is safe. AI review evaluates the code in the diff. It does not evaluate whether the test you didn't write would have caught the bug it didn't see. New code still needs new tests. If anything, AI-authored PRs need stricter test coverage rules because the AI is faster than your test culture is mature. The patterns in "Unit testing React components: a comprehensive guide" apply doubly to AI-generated changes.

Reviewing the AI review. Some teams have humans reading every AI comment to decide whether it's worth surfacing to the author. This defeats the entire point. Configure the tool's confidence threshold once, trust the filter, and let the author triage. If the false positive rate is bad enough to require a human filter, you picked the wrong tool.

Forgetting that AI agents can be wrong about why a change is safe. The most dangerous comment an AI review can leave is "no issues found" on a PR that touches a security boundary. Set up a separate path for changes touching auth, payments, PII, and SQL: those go straight to a human, regardless of what the bot said. This is the same instinct behind the safety architecture in the MCP server guide for AI agent workflows, and it applies anywhere you've connected an autonomous agent to write actions.
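The separate path can start as a path filter that runs before anyone reads the bot's verdict. A rough sketch: the glob patterns are hypothetical and would need to match your repo layout, and they over-match on purpose (`*auth*` also catches `author.ts`), since a false alarm here only costs a human a look.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List

# Hypothetical patterns for the four areas named above.
SENSITIVE_PATTERNS = ["*auth*", "*payment*", "*pii*", "*.sql"]


def needs_human_security_review(
    changed_paths: Iterable[str],
    patterns: Iterable[str] = tuple(SENSITIVE_PATTERNS),
) -> List[str]:
    """Return the changed paths that must go to a human, whatever the bot said."""
    pats = list(patterns)
    return sorted(
        path
        for path in changed_paths
        # Lowercase the path so `Payments/` and `payments/` are treated alike.
        if any(fnmatchcase(path.lower(), pat) for pat in pats)
    )
```

If the returned list is non-empty, request a human reviewer and ignore a clean AI result for those files.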

If I were standing up AI code review at a 30-engineer team today, I'd start with one paid tool, not three. I'd pick Cursor BugBot if the team already lives in Cursor, Claude Code Review if the team uses Claude Code as the primary writing agent (with another model doing the review pass), or Greptile if the codebase is large enough that cross-file context is the dominant pain. I'd skip CodeRabbit unless we needed multi-platform support across GitHub, GitLab, and Bitbucket, where it's the only serious option.

I'd run it for two weeks before tuning anything. I'd track three numbers: false positive rate, measured by author dismissals; real bugs caught, measured by post-merge revert correlations; and review latency. If the false positive rate is above 30% at week two, I'd raise the confidence threshold so fewer low-confidence comments surface, or switch tools. If real bugs caught is under one a week, the tool is decorative.
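Those three numbers and the two cutoffs are simple enough to compute from a spreadsheet export. A sketch, assuming you can count dismissals and attribute caught bugs by hand; the `TrialStats` fields and verdict labels are made up for illustration.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass(frozen=True)
class TrialStats:
    ai_comments: int               # comments the tool posted during the trial
    dismissed_by_author: int       # comments authors dismissed as wrong
    real_bugs_caught: int          # findings confirmed as real bugs
    weeks: float                   # trial length
    review_latency_minutes: List[float]


def false_positive_rate(s: TrialStats) -> float:
    return s.dismissed_by_author / s.ai_comments if s.ai_comments else 0.0


def bugs_per_week(s: TrialStats) -> float:
    return s.real_bugs_caught / s.weeks


def median_latency(s: TrialStats) -> float:
    return median(s.review_latency_minutes)


def verdict(s: TrialStats) -> str:
    """Apply the 30% false-positive and one-bug-a-week cutoffs from the text."""
    if false_positive_rate(s) > 0.30:
        return "retune-or-switch"
    if bugs_per_week(s) < 1:
        return "decorative"
    return "keep"
```

Latency has no cutoff in the text, so it is reported here but does not feed the verdict.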

I'd require every agent-authored PR to have a human approval, full stop, even if the AI review is clean. That rule pays for itself the first time the agent confidently writes a wrong migration. The broader argument for human-in-the-loop on coding agents is in the OpenAI Codex app and AI coding agents complete guide, and the same logic applies whether the agent is Codex, Claude Code, Cursor, or whatever ships next month.

AI code review is past the demo phase. The good tools are catching real bugs, the prices are landing in the range where mid-size teams can afford them, and the integration story is mature enough that you can have something running before lunch. None of that means you can fire the senior reviewer. It means the senior reviewer's job changed: less hunting for nulls, more guarding the architecture and the boundaries the AI doesn't know matter.

The teams getting this right in 2026 treat AI review as a power tool, not an oracle. They run it on every PR. They tune it for their codebase. They keep humans on the calls that involve judgment. And they accept that the new bottleneck isn't writing code or even reviewing code. It's deciding what code is worth writing in the first place, which is a problem no agent has solved yet.

If you're still doing review the way you did in 2023, AI hasn't broken your team yet. Volume will. Pick a tool this week.
